Analyzing astronomical data with Apache Spark

par

Amphi Recherche

LPC

The volume and complexity of the data recorded by current and future astrophysics experiments call for a broad range of expertise in computer science, signal processing, statistics, and physics. Analysing these data sets precisely is a serious computational challenge that cannot be met without state-of-the-art tools. It requires sophisticated and robust analyses run on many machines, since data sets must be processed or simulated several times. Among future experiments, the Large Synoptic Survey Telescope (LSST) will collect terabytes of data per observation night, and processing and analysing them efficiently remains a major challenge.

In this work, we investigate how to leverage Apache Spark to process and analyse future data sets in astronomy. We first study the question in the context of the FITS file format, which is used across a wide range of astrophysical experiments. To this end, we designed a data source API extension (spark-fits) to manipulate telescope images and astronomical tables with Apache Spark without performing any data conversion. We also developed a new Apache Spark extension (spark3D) to manipulate 3D data sets and perform efficient queries: distribution of 3D shapes, data set joins and cross-matches, nearest-neighbour searches, spatial queries, and more.

We will then share our experience of interfacing existing astronomy codes with Apache Spark: the difficulties, but also the gains of using such a framework compared with previous performance.

Finally, I will introduce AstroLab Software (https://astrolabsoftware.github.io), a project that aims to provide advanced software tools to overcome the modern science challenges faced by research groups.

This seminar will be accessible to non-astronomers.

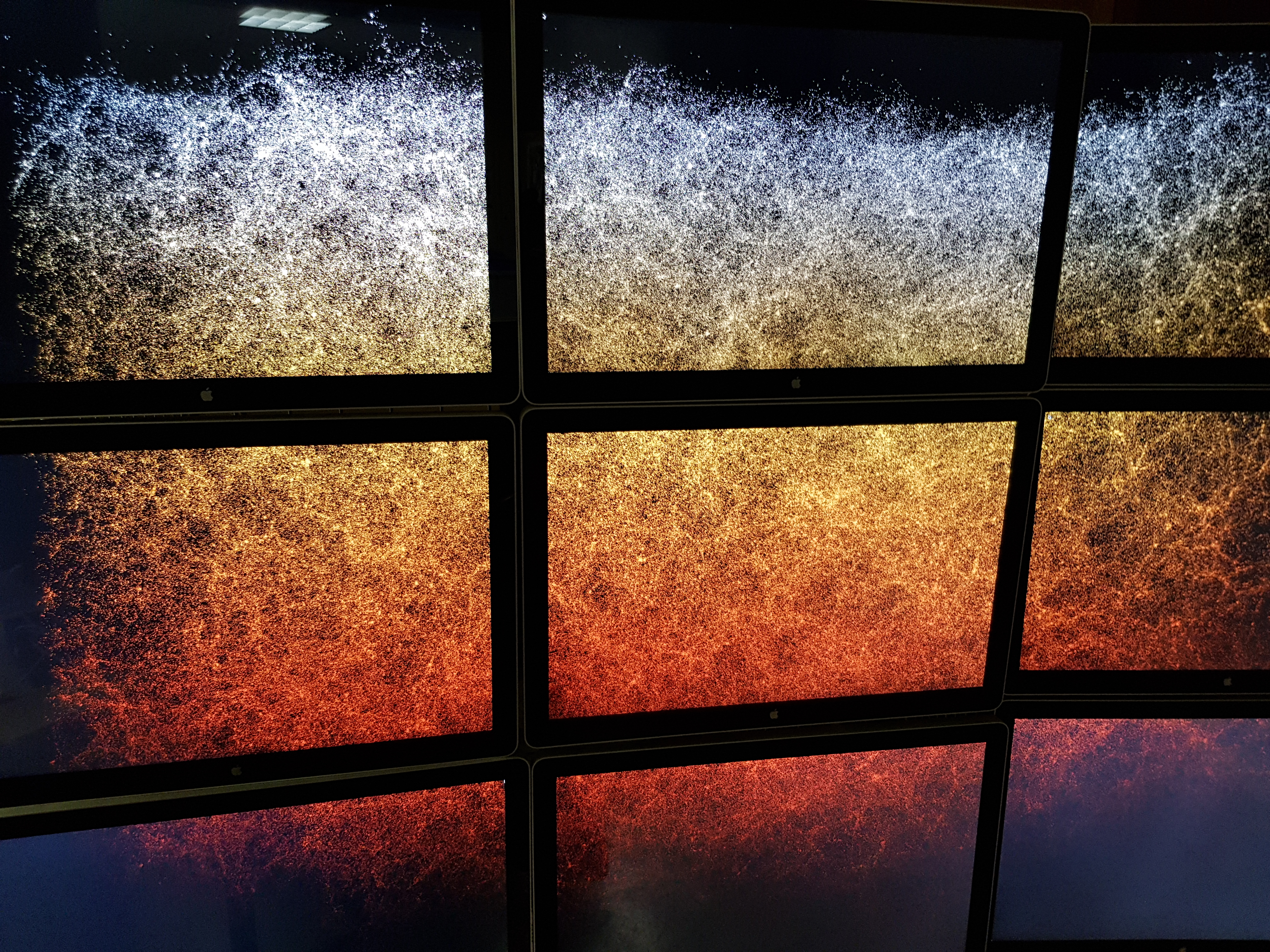

3.4 million simulated galaxies from LSST DC2 data, with redshifts between 1 (white) and 1.2 (black). The data were extracted with LAL/Spark from an 11 GB Parquet catalog file and are displayed on the small 3x3 wall of screens at LAL.